Just recently there was a post on social media showing two orcas in the shallows of a beach in Benajarafe, near where I live. It was a wonderful sight, and something I happily accepted, because dolphins have been seen there as well; dolphins and orcas are often seen in the Mediterranean near us. A few days later, someone put up a similar post, but this time it showed tigers on the beach. Same photo, different animal. The poster declared that the first one was a fake. Personally, I don't think it was, but it made me realise how easy it would be to feed people false information. And that brings me to the fear of AI.

When I first heard people talking about AI, I was concerned about how it was going to affect writers and students. A friend explained how easy it was to access ChatGPT and how it would write whatever it was asked to. He tried to demonstrate this by asking it to review one of my books; it produced a review that had nothing to do with my book, except the title. (I'm obviously not as famous as I thought I was.) This, of course, illustrates one of the real drawbacks of ChatGPT: when it doesn't have the information it needs, it may produce something plausible-sounding and present it as fact. According to OpenAI, the company that created ChatGPT, this is not uncommon among 'large language models' such as this one, and it is known as 'hallucination'. I decided I ought to find out more about this rapidly changing technology: its benefits, its negative aspects, our fears, and whether it needs to be regulated.

ChatGPT (the GPT stands for Generative Pre-trained Transformer) was first released in November 2022, and by January 2023 it had over 100 million users. Then a more stable version was released on 24th May 2023, just two weeks ago! Since then it has been a hot topic of conversation. OpenAI have made the basic version free to use, but I can't imagine that it will remain that way for long. There are many alternatives to ChatGPT, such as Microsoft's Bing Chat and Chatsonic, and broadly speaking they are all trained in part using a technique called reinforcement learning from human feedback.

So what is being done to control and monitor this software? AI development is a race to be the best and the biggest, and it's driven by profit, despite what OpenAI say about it being free to use. There are ethical and moral concerns about a technology with such power, and many of its legal ramifications are not yet clear.

I said my first concerns were about education and authors. Turnitin's AI-detection technology is designed to keep abreast of AI development, and it can be used to check students' work to establish whether AI has been used and how. But what about authors? AI systems are trained on existing writing with no regard for copyright, and there are also concerns about infringements of authors' moral right of integrity, breaches of data protection laws, invasion of privacy and the outright theft of their work. AI-generated ebooks and stories are already being submitted to publishers. Audiobook voice actors have had their voices replicated without permission. Even people within the high-tech industry, such as Elon Musk and Steve Wozniak, have called on developers of AI systems to pause work on even more powerful systems until there is greater control and any risks are manageable.

The Society of Authors recently sent out a newsletter about the need for the government to protect the rights and interests of authors and other creative professionals, insisting that anything produced by AI should be labelled as such, and that works created by humans and used by AI should be acknowledged and paid for.

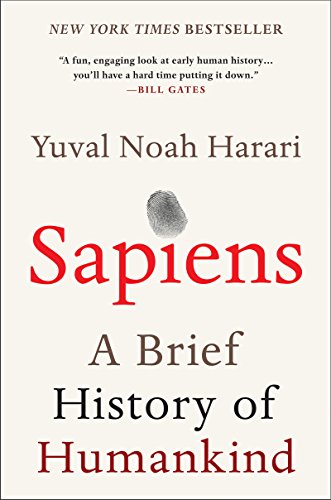

Some voices make even stronger arguments for caution. Yuval Noah Harari, author of Sapiens: A Brief History of Humankind, has recently written about his fears regarding AI. His main premise is that all human culture is based on language, from the myths and scriptures of the past to the stories and democracy of the present. If that language is generated by a machine, how are we to know what we can trust? He too emphasises the need for strict regulation of AI before it is too late to control it.

I know, it is all beginning to sound like a scene from a science-fiction film, but I remember reading the stories of Ray Bradbury as a teenager (there was a man with a vivid imagination) and thinking them incredible. In one story there was a television screen that covered a whole wall, and people could interact with it! Who could ever have imagined that? The world is changing at a speed that was unimaginable when I was young, and it still is. This is not necessarily a bad thing, as long as we are in control. The use of AI has to be disclosed in all cases. As Yuval Noah Harari says, "If I am having a conversation with someone and I cannot tell whether it is a human or AI, that's the end of democracy."

This blog post was written by a human, and I hope the information I have gathered from the internet was too!